Over time, we have learnt a lot from our mistakes and practices while building our games. We currently have two major mobile applications, CallBreak and Marriage, and we are happy to serve 1.3M users. In the coming years, we hope to reach and connect more friends and families over card games. We will also soon surprise you with games other than cards. (Interesting things are in the pipeline.)

As we embark on our 7th year, we would like to share our seven major learning experiences over the years.

- JavaScript -> Flow -> TypeScript

- React Hooks

- UDP Communication

- Socket Based Servers

- Monorepo to Multi-repo System

- Management Server and Bhoos CLI

- Distributed and Scalable servers

JavaScript -> Flow -> TypeScript

JavaScript has become a ubiquitous language used for building all types of applications. At Bhoos, we use it for building a wide range of applications from frontend and native apps to backend services and internal tools.

As our applications grew in complexity, we encountered challenges with updating and maintaining bug-free code. The dynamic typing nature of JavaScript made it difficult to catch type errors during development, which could only be detected at runtime, leading to crashes for our users. To address this issue, we integrated a static type checking tool into our development process. This helps catch bugs early in the development process and makes the code easier to reason about and maintain.
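To illustrate the kind of error a static type checker catches, here is a minimal sketch. The `Player` shape and the `totalScore` function are hypothetical and are not taken from our codebase.

```typescript
// Hypothetical shape for illustration only.
interface Player {
  id: string;
  name: string;
  score: number;
}

// Sums the scores of all players at a table.
function totalScore(players: Player[]): number {
  return players.reduce((sum, p) => sum + p.score, 0);
}

const table: Player[] = [
  { id: "p1", name: "Asha", score: 12 },
  { id: "p2", name: "Bikash", score: 7 },
];

const total = totalScore(table);

// The compiler rejects the call below before the code ever runs,
// because `score` is a string rather than a number:
// totalScore([{ id: "p3", name: "Chandra", score: "5" }]);
```

In plain JavaScript the commented-out call would run without complaint and silently produce a string instead of a number, which is the class of bug that would otherwise surface only at runtime.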

Initially, we used Flow by Facebook, as it was already integrated into our React ecosystem. Meanwhile, TypeScript was also gaining popularity. TypeScript added appealing features like interfaces, namespaces, decorators, and async/await along with type annotations. This, along with its larger and more active ecosystem of libraries, tools, and integrations, led us to adopt TypeScript over Flow.

To know more about this: click here!

React Hooks

Before React Hooks, we used class-based components to develop applications. We faced two major challenges. First, we had to rewrite the same logic in several components when it could have been shared among them. Second, passing props down through many layers (prop drilling) and controlling rendering were hard. To overcome these challenges, we used design patterns that made the code more complex to read, write, improve, maintain, and co-locate.

With React Hooks like useContext, we could avoid prop drilling and manage rendering more easily. This makes the code shorter and simpler to understand. Built-in React hooks reduce code complexity. Moreover, logic can be separated out and used in multiple components, which reduces code duplication.

Built-in hooks like useState, useEffect, useRef, and useContext helped us build custom hooks. Custom hooks help separate concerns by extracting the logic related to a particular problem. Because a hook can contain only logic, it can be reused on any platform (both mobile applications built with React Native and web applications built with React).

Since hooks are units of logic, they can be tested separately. Moreover, hooks can be composed: one hook can call several others to model a complex problem or behavior.

For example, one hook can manage state while another custom hook performs an animation.

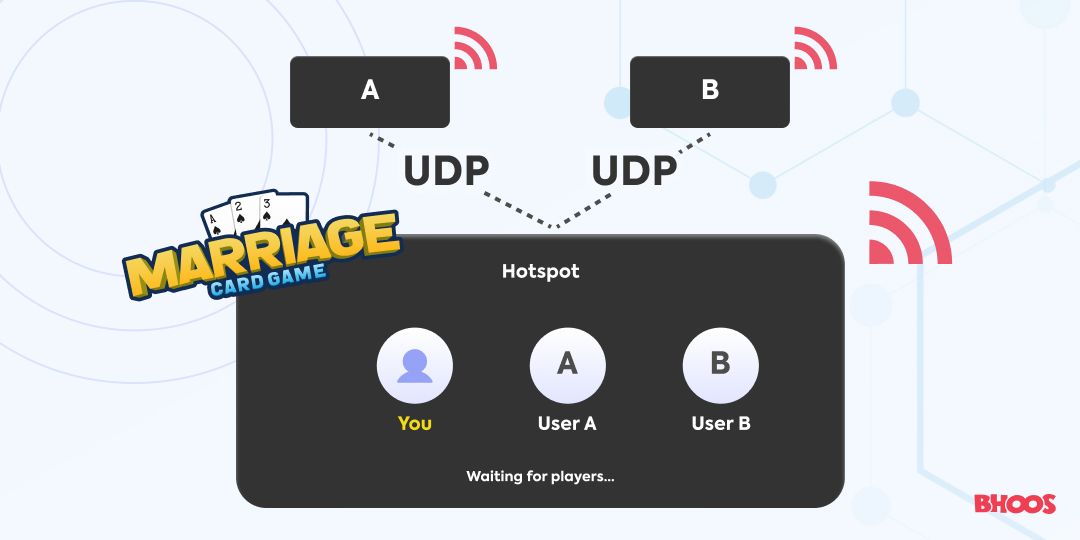

UDP Communication

At Bhoos, we usually avoid readily available libraries and services. Instead, we create something of our own that we can customize and improve as and when required. While developing our multiplayer games, we first considered WebSocket. However, the in-game hotspot mode requires one of the devices to act as a server, so we would have needed to implement a WebSocket server on the mobile device itself. This would have been a huge undertaking if we were to do it ourselves, and we couldn't find a decent option for mobile devices. So, our next option was a lower-level communication protocol, TCP or UDP, which would allow us to write our own server with minimal features. We chose UDP because:

- It supports broadcasting on the local network, which would improve the hotspot user experience.

- It is much simpler, although it is an unreliable protocol. Our game engine was already built on an action-based state management pattern that incorporates sequencing and reliability, so building reliability on top of UDP was a lot easier for us.

- It is extremely fast and uses a lot less resources. With just 2 GB RAM and 2 vCPU we were serving around 10K concurrent users in our Marriage Game.
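The sequencing idea mentioned above can be pictured with a small sketch. This is not our actual engine code: the `SequencedAction` shape and the `ActionReceiver` class are hypothetical names chosen for illustration, and the UDP socket itself is left out so that only the ordering logic is shown. The assumption is that every action carries an increasing sequence number and that the receiver may ask the sender to resend any gaps.

```typescript
// A game action tagged with a sequence number (hypothetical shape).
interface SequencedAction {
  seq: number;
  type: string;
}

// Accepts actions that may arrive out of order, duplicated, or not at all,
// and hands them to the game in strict sequence order.
class ActionReceiver {
  private nextSeq = 1;
  private highestSeen = 0;
  private pending: { [seq: number]: SequencedAction } = {};
  private apply: (action: SequencedAction) => void;

  constructor(apply: (action: SequencedAction) => void) {
    this.apply = apply;
  }

  // Called for every datagram that arrives. Returns the sequence numbers
  // that are still missing and should be requested again from the sender.
  receive(action: SequencedAction): number[] {
    const isDuplicate =
      action.seq < this.nextSeq || this.pending[action.seq] !== undefined;
    if (!isDuplicate) {
      this.pending[action.seq] = action;
      this.highestSeen = Math.max(this.highestSeen, action.seq);
      // Apply every buffered action that is now contiguous.
      let next: SequencedAction | undefined = this.pending[this.nextSeq];
      while (next !== undefined) {
        delete this.pending[this.nextSeq];
        this.apply(next);
        this.nextSeq += 1;
        next = this.pending[this.nextSeq];
      }
    }
    return this.missing();
  }

  // Gaps between the next expected number and the highest one seen so far.
  private missing(): number[] {
    const gaps: number[] = [];
    for (let seq = this.nextSeq; seq < this.highestSeen; seq++) {
      if (this.pending[seq] === undefined) gaps.push(seq);
    }
    return gaps;
  }
}

// Usage: action 3 arrives before action 2.
const applied: number[] = [];
const receiver = new ActionReceiver((a) => applied.push(a.seq));
const gapsAfterFirst = receiver.receive({ seq: 1, type: "deal" });
const gapsAfterThird = receiver.receive({ seq: 3, type: "play" });
const gapsAfterSecond = receiver.receive({ seq: 2, type: "bid" });
```

Because the game state only ever advances by applying actions in order, a lost or reordered datagram delays the state rather than corrupting it.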

Socket Based Servers

We used RESTful APIs to communicate from our Call Break and Marriage games to the server. After rounds of load testing and flamegraph analyses, we encountered an issue. Express, which serves the API, was itself consuming a lot of the execution time during each API call. For common calls, such as syncing data after a game has finished, the Express middleware had more overhead than the rest of the function's execution. This was the primary motivation behind moving from REST towards WebSockets.

Performance: The uWebSockets library was blazing fast. The library, which is written in C++ and exposed to Node.js, has been demonstrated serving nearly a million open sockets from an older consumer-grade laptop. Compared to Express, the overhead added by this library was almost nonexistent.

Bidirectionality: REST is request-driven from the client side, so we were using various workarounds to send server-initiated events. By switching to bidirectional WebSockets, we simplified many of these needs and removed the requirement for an external platform like Firebase. This aligns with our philosophy of minimizing the use of third-party libraries and services unless absolutely crucial.

Statefulness: REST is stateless, which seems easier to implement for most applications. However, many of our design choices led us to a backend framework that appeared stateless for individual calls yet, in terms of semantics, still had a somewhat stateful flow. For instance, you could not make other API calls without having a session, which meant login was a prerequisite for almost any other action. Of course, we could have included the information needed to execute an API in every single call, but this would have added yet more overhead.
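The difference can be sketched as follows, assuming a hypothetical `PlayerConnection` object that lives for as long as one socket stays open. The message shapes, the `authenticate` callback, and the reply strings are all made up for illustration, and the socket library is left out.

```typescript
// Hypothetical message and session shapes for illustration.
type ClientMessage =
  | { type: "login"; token: string }
  | { type: "syncGame"; gameId: string };

interface Session {
  userId: string;
}

// One instance exists per open socket and remembers who is logged in.
class PlayerConnection {
  private session: Session | null = null;
  private authenticate: (token: string) => Session | null;

  constructor(authenticate: (token: string) => Session | null) {
    this.authenticate = authenticate;
  }

  handle(message: ClientMessage): string {
    if (message.type === "login") {
      this.session = this.authenticate(message.token);
      return this.session !== null ? "ok" : "error: invalid token";
    }
    if (this.session === null) return "error: login required";
    // The session is already known, so this message carries no credentials.
    return `synced ${message.gameId} for ${this.session.userId}`;
  }
}

// Usage with a stand-in authenticator that accepts a single token.
const connection = new PlayerConnection((token) =>
  token === "secret" ? { userId: "u1" } : null
);
const beforeLogin = connection.handle({ type: "syncGame", gameId: "g42" });
const login = connection.handle({ type: "login", token: "secret" });
const afterLogin = connection.handle({ type: "syncGame", gameId: "g42" });
```

With a stateless API, the equivalent of `authenticate` would have to run on every call; here it runs once per connection.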

Time will tell how the REST-to-WebSocket migration will play out, but we are excited about this change.

Monorepo to Multi-repo System

A monorepo (short for monolithic repository) is a single repository that contains multiple projects. A multi-repo (short for multiple repositories) is a setup in which each project lives in its own separate repository.

Here are some of the advantages of multi-repo:

- It’s easier to work with a large team, since different team members can work on different repositories simultaneously without interference.

- It’s easier to deploy code, since each repository can be deployed separately.

- It’s easier to scale, since each repository can be managed and scaled independently.

Management Server and Bhoos CLI

Speed and confidence in the development and deployment process are crucial to us. However, during development, an application can depend on multiple in-house packages, and the development involves multiple people. It would be easy to publish every package change to a registry with an increased version number. However, frequent versioning and intermediate buggy versions make this scary. Moreover, not everyone should have the right to publish packages, because an intermediate buggy version might get pulled into the next production build through a dependency specification like ^1.0.1. Sending updates is scary too: what if there are some neglected environment-related issues? What if the application does not even start?
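To see why a specification like ^1.0.1 can pull in an unintended version, here is a simplified sketch of how a caret range is matched. It follows the usual npm rule that a caret allows changes that do not modify the left-most non-zero part of the version, but it ignores pre-release tags and is not the real `semver` package; the function names are our own.

```typescript
type Version = [number, number, number];

function parseVersion(text: string): Version {
  const match = /^(\d+)\.(\d+)\.(\d+)$/.exec(text);
  if (match === null) throw new Error(`Invalid version: ${text}`);
  return [Number(match[1]), Number(match[2]), Number(match[3])];
}

// Negative if a < b, zero if equal, positive if a > b.
function compareVersions(
  [aMajor, aMinor, aPatch]: Version,
  [bMajor, bMinor, bPatch]: Version
): number {
  if (aMajor !== bMajor) return aMajor - bMajor;
  if (aMinor !== bMinor) return aMinor - bMinor;
  return aPatch - bPatch;
}

// First version that a caret range no longer accepts.
function caretUpperBound([major, minor, patch]: Version): Version {
  if (major > 0) return [major + 1, 0, 0];
  if (minor > 0) return [0, minor + 1, 0];
  return [0, 0, patch + 1];
}

// True if `version` falls inside a range written as "^x.y.z".
function satisfiesCaret(version: string, range: string): boolean {
  if (range.charAt(0) !== "^") throw new Error(`Not a caret range: ${range}`);
  const lower = parseVersion(range.slice(1));
  const candidate = parseVersion(version);
  return (
    compareVersions(candidate, lower) >= 0 &&
    compareVersions(candidate, caretUpperBound(lower)) < 0
  );
}

// Any published 1.x.y at or above 1.0.1 is accepted, buggy or not.
const acceptsPatch = satisfiesCaret("1.0.2", "^1.0.1");
const acceptsMinor = satisfiesCaret("1.4.0", "^1.0.1");
const acceptsMajor = satisfiesCaret("2.0.0", "^1.0.1");
```

Here `acceptsPatch` and `acceptsMinor` are true while `acceptsMajor` is false, so a hastily published 1.0.2 would be picked up by the next install unless a lockfile pins the version.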

Deploying to production is not the end of the process. We need to keep a constant check on servers and applications so that they don't crash and are able to handle the load.

Management Server and Bhoos CLI address these problems. They provide mechanisms to:

- Publish and host npm packages and Node applications bundled with all their dependencies (i.e., like an npm registry)

- Monitor other servers, collect logs, and notify of problems in servers or errors in applications

- Gracefully update running server applications without disturbing existing connections

This improves development and deployment speed, increases confidence in deployments, and allows a quick rollback in case of problems.

Distributed and Scalable servers

Our backend system comprises two kinds of servers - Game API Servers and Game Engine Servers. We are concerned with high availability and fault tolerance for both of these servers.

Game API Servers

As previously mentioned, the bulk of out-of-game requests used to be handled by RESTful API servers, and we are migrating towards socket-based servers. RESTful servers are quite commonly made distributed by adding a load balancer and rerouting requests. Horizontally scaling WebSockets is a slightly harder challenge, but it is still achievable with a virtual socket layer acting as a load balancer. By hitting the same cache and database, we can serve requests using multiple servers. These can be commissioned, decommissioned, and upgraded as needed. By decommissioning older-version servers only after they have completed all their incoming requests, and by maintaining backwards compatibility in public APIs, we are able to upgrade our servers seamlessly.
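The commission, drain, and decommission cycle can be sketched roughly as below. This is a simplified in-memory model, not our load balancer: `ServerPool`, its method names, and the least-busy routing rule are assumptions made for illustration.

```typescript
interface ServerInstance {
  id: string;
  version: string;
  activeRequests: number;
  draining: boolean;
}

class ServerPool {
  private servers: ServerInstance[] = [];

  commission(id: string, version: string): void {
    this.servers.push({ id, version, activeRequests: 0, draining: false });
  }

  // Stop sending new work to servers that run the given version.
  drainVersion(version: string): void {
    for (const server of this.servers) {
      if (server.version === version) server.draining = true;
    }
    this.removeIdleDraining();
  }

  // Assigns a request to the least busy server that is not draining.
  route(): string {
    let chosen: ServerInstance | undefined;
    for (const server of this.servers) {
      if (server.draining) continue;
      if (chosen === undefined || server.activeRequests < chosen.activeRequests) {
        chosen = server;
      }
    }
    if (chosen === undefined) throw new Error("No server available");
    chosen.activeRequests += 1;
    return chosen.id;
  }

  // Marks one request on the given server as finished.
  complete(id: string): void {
    for (const server of this.servers) {
      if (server.id === id && server.activeRequests > 0) {
        server.activeRequests -= 1;
      }
    }
    this.removeIdleDraining();
  }

  ids(): string[] {
    return this.servers.map((server) => server.id);
  }

  // A draining server is decommissioned once its last request completes.
  private removeIdleDraining(): void {
    this.servers = this.servers.filter(
      (server) => !(server.draining && server.activeRequests === 0)
    );
  }
}

// Usage: upgrade from version 1.0 to 1.1 while a request is in flight.
const pool = new ServerPool();
pool.commission("a", "1.0");
const first = pool.route(); // handled by "a"
pool.commission("b", "1.1");
pool.drainVersion("1.0"); // "a" stays until its request finishes
const second = pool.route(); // new work goes to "b"
const idsWhileDraining = pool.ids();
pool.complete(first);
const idsAfterDrain = pool.ids();
```

The in-flight request on the old server is never interrupted, and the old server disappears from the pool only after it has nothing left to do.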

Game Engine Servers

Game engine servers are responsible for running our online multiplayer games. Rather than using a central store for the multiplayer game data, we store data only on the server where the game is running. We have rarely experienced failure cases in this scenario. Besides, this is also cost-effective.

If the load on either the API or the engine servers drastically increases, we can smoothly spawn new instances and use them to serve requests or handle multiplayer games. We are also experimenting with a distributed database architecture. So far, we haven't faced bottlenecks in our vertically scaled cache and database servers.